- Previous message: Sinnathurai Srivas: "Re: minimizing size (was Re: allocation of Georgian letters)"

- In reply to: James Kass: "Re: minimizing size (was Re: allocation of Georgian letters)"

- Next in thread: Jeroen Ruigrok van der Werven: "Localized software (was: Re: minimizing size)"

- Reply: Jeroen Ruigrok van der Werven: "Localized software (was: Re: minimizing size)"

- Messages sorted by: [ date ] [ thread ] [ subject ] [ author ] [ attachment ]

- Mail actions: [ respond to this message ] [ mail a new topic ]

On Feb 8, 2008, at 1:46 PM, James Kass wrote:

>

> John H. Jenkins replied,

>

>>> 2/

>>> My question was, mostly all proper publishing softwares do not

>>> yet support complex rendering. How many years since Unicode come

>>> into being?

>>> When is this going to be resolved, or do we plan on choosing an

>>> alternative encoding as Unicode is not working.

>>>

>>

>> Well, what applications are you thinking of and on what platforms?

>> As I say, Word on Windows is fine for almost everything in

>> Unicode, and Pages on Mac OS X is fine for all of it. It is

>> resolved now in that sense.

>

> http://www.trigeminal.com/samples/provincial.html

>

> From Michael Kaplan's page, "Anyone Can Be Provincial", I scraped four

> script examples into a plain-text editor:

>

> அவர்கள் ஏன் தமிழில்

> பேசக்கூடாது ?

> რატომ არ ლაპარაკობენ

> ისინი ქართულად?

> Ինչու՞ նրանք չեն խոսում Հայերեն

> なぜ、みんな日本語を話してくれないのか?

Well, at least for the examples you cite, the situation is better on

Mac OS X. I did the same thing using two popular Mac text editors.

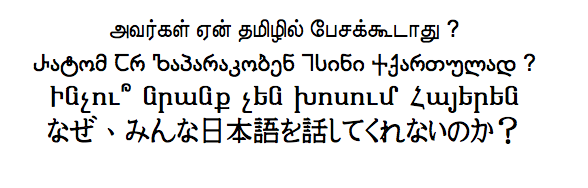

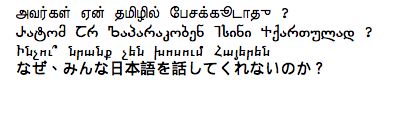

TextEdit yields:

BBEdit results in:

Cutting and pasting from the PDF back into the text editor screwed up

the Tamil, but the others came through OK (albeit with extraneous line

returns).

And to be fair, Tom Gewecke pointed out to me privately that Pages

doesn't do bidi correctly, whereas Mellel does.

>

> It's this lack of support for complex scripts (and, by extension,

> Unicode) in popular publishing applications which is so distressing

> to users.

I'm in total agreement with you here, James. The big factor is

economics, as Ed points out. Supporting the non-complex rendering

bits of Unicode is relatively cheap and gets you the biggest markets:

the Americas, Europe, and East Asia. The cost-benefit ratio is much

worse when it comes to supporting bidi and complex scripts, so

companies cut corners by not supporting them.

The big OS companies can help by providing text engines like Uniscribe

or CoreText, but not everybody sees fit to use them. (Not even in the

companies themselves.)

I can remember, however, the days when companies had to spend millions

to rewrite their software to work with Japanese or Chinese. As a

result, those markets tended to have marginal support. Unicode has

improved that situation immensely. And without Unicode, companies

would *still* not be supporting South Asian languages, because they

would still have to rewrite their software to do it and it just

wouldn't be worth the cost.

Even if Unicode had used an encoding model for South Asian scripts

that didn't require complex rendering, the current problem would exist

because then text would display correctly but, for example, databases

would have to be substantially rewritten to convert the glyph stream

back into a series of letters for the operations that they typically

support.

=====

John H. Jenkins

[email protected]

- Next message: Sinnathurai Srivas: "Re: minimizing size (was Re: allocation of Georgian letters)"

- Previous message: Sinnathurai Srivas: "Re: minimizing size (was Re: allocation of Georgian letters)"

- In reply to: James Kass: "Re: minimizing size (was Re: allocation of Georgian letters)"

- Next in thread: Jeroen Ruigrok van der Werven: "Localized software (was: Re: minimizing size)"

- Reply: Jeroen Ruigrok van der Werven: "Localized software (was: Re: minimizing size)"

- Messages sorted by: [ date ] [ thread ] [ subject ] [ author ] [ attachment ]

- Mail actions: [ respond to this message ] [ mail a new topic ]

This archive was generated by hypermail 2.1.5 : Fri Feb 08 2008 - 15:24:30 CST