- Previous message: Andrew West: "Re: Variation Selectors"

- In reply to: Kent Karlsson: "RE: Representative glyphs for combining kannada signs"

- Next in thread: Doug Ewell: "Re: Representative glyphs for combining kannada signs"

- Reply: Doug Ewell: "Re: Representative glyphs for combining kannada signs"

- Reply: Philippe Verdy: "Re: Representative glyphs for combining kannada signs"

- Reply: Kent Karlsson: "RE: Representative glyphs for combining kannada signs"

Kent Karlsson wrote:

> Antoine Leca wrote:
>> Are you intending to say that one SHOULD (IS REQUIRED TO)
>> register in the codepoints the use of 2-dot-like Umlaut in a
>> different way from 2-stroke-like Umlaut?
>
> Yes, and they already are. U+0308 COMBINING DIAERESIS vs. U+030B
> COMBINING DOUBLE ACUTE. There is no "umlaut" character...

I used "Umlaut" to denote (clearly, or so I thought) the characteristic German *feature*, NOT the codepoints. I beg your pardon for not having been more explicit.
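The codepoint distinction Kent refers to can be checked directly. A minimal Python sketch using only the standard `unicodedata` module: U+0308 and U+030B are separate combining marks, and NFC composes each of them with a base `u` into a different precomposed letter. The variable names are illustrative only.

```python
import unicodedata

# U+0308 COMBINING DIAERESIS and U+030B COMBINING DOUBLE ACUTE are distinct marks.
two_dots = "u\u0308"     # u + combining diaeresis
two_strokes = "u\u030b"  # u + combining double acute

for seq in (two_dots, two_strokes):
    composed = unicodedata.normalize("NFC", seq)
    # NFC composes each sequence into its own precomposed letter:
    # U+00FC LATIN SMALL LETTER U WITH DIAERESIS
    # U+0171 LATIN SMALL LETTER U WITH DOUBLE ACUTE
    print(f"U+{ord(composed):04X}", unicodedata.name(composed))
```

So the two shapes are two different texts as far as Unicode is concerned; whether the German Umlaut *feature* may be drawn with either shape is exactly the question being argued here.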

>> Are you intending to say that if I wrote "Mme" (Mrs in
>> French), I should differentiate, in a not yet standardised
>> way, the fact that I write it with superscript characters
>> or not? Saying it is a "spelling" difference?
>
> Definitely. In this particular case one may debate whether to use
> markup or to (ab)use U+1D50 MODIFIER LETTER SMALL M and
> U+1D49 MODIFIER LETTER SMALL E.

To put it plainly: to write the French equivalent of "Mrs", I can either

- write the slightly incorrect Mme, or
- write the more "correct" M[][] (where [] represents the empty box that everybody except a handful of people will actually see).

Somehow I think this is *not* a workable solution.

> And m² is not at all the same as m2.

I guess not, although I am not completely sure (particularly since I would expect the second to read "m<SUP>2</SUP>" instead, of course, which uses the same underlying codepoints, just presented differently).

But assuming that, the latter is often used when the author intends the former. And one can conceive of modifying the text to display the latter; in fact, several user interfaces do exactly that.

>> So, if the original encoder does NOT make a distinction in
>> meaning between the two forms, why would Unicode require
>> him to encode this difference at codepoint level?
>
> How do you know if the "original encoder" makes the difference or
> not?

Because *I* am the original encoder, in this case. :-)

Because, in the Mme (or m2) case, the normal way to enter it is to type the keys [shift] [m] [m] [e] [space], stop typing, select the second m and the e, run a command that makes them superscript (and which, according to your point of view above, should change the underlying codepoints to U+1D50 and U+1D49), then resume typing the full name...

Because I expect to find "Mme" even if "me" is superscripted; _particularly_ if the Find dialog box does not offer me the possibility of specifying that some letters are superscripted...
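The Find-dialog concern can be illustrated with compatibility normalization. U+1D50, U+1D49 and U+00B2 SUPERSCRIPT TWO all have `<super>` compatibility decompositions, so NFKC folds them back to m, e and 2. A minimal sketch, assuming a hypothetical `folded_find` helper that NFKC-normalizes both the text and the search string before a plain substring search; this is not a description of how any particular word processor implements Find.

```python
import unicodedata

def folded_find(text: str, needle: str) -> int:
    """Hypothetical helper: find `needle` in `text` after NFKC-folding both.

    Returns the index in the *folded* text, or -1 if absent. NFKC can change
    string length, so the index is not guaranteed to be valid in `text`.
    """
    folded_text = unicodedata.normalize("NFKC", text)
    folded_needle = unicodedata.normalize("NFKC", needle)
    return folded_text.find(folded_needle)

# "Mme" written with U+1D50 MODIFIER LETTER SMALL M and U+1D49 MODIFIER LETTER SMALL E
madame_mod = "M\u1d50\u1d49 Dupont"
# "m²" written with U+00B2 SUPERSCRIPT TWO
area = "12 m\u00b2"

print("Mme" in madame_mod)             # False: a plain search misses it
print(folded_find(madame_mod, "Mme"))  # 0: the folded search finds it
print(folded_find(area, "m2"))         # 3
```

Note the trade-off: the same folding that lets "Mme" match also erases exactly the distinction the modifier letters were meant to record.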

>> when they are viewed as different.
>
> Again, how do you know they are not.

Because my feeling (in fact, my interpretation of the Unicode and ISCII descriptions) is that the Indic codepoints are abstract characters, not elements that combine in defined ways to produce intermediate glyph elements which then only remain to be drawn by the font, as you seem to think.

I base that view, first, on the fact that the virama concept requires some abstraction layer (reordering, combination, so-called backing store, etc.) which is absent even from Thai, and even more so from Western scripts; and second, on the underlying nature of the Brahmi-derived scripts, with their associated sounds, the sandhi phenomena, etc.

I consider that Indic character encodings are still in their infancy: we do not have the accumulated experience, with its many trials and errors, that we have with Latin encodings. ISCII was a radical departure from the traditional model, and Unicode quickly followed that path, before the dust had settled over the lessons of the ISCII experience (in fact, even before the ink was dry on the copies of the final ISCII standard... but that's another story).

Furthermore, Unicode (following ISCII but going further, and we do not see an end to this trend) has defined several modifiers whose purpose is to specify the rendering more finely when it is not clear enough, or when the author wants to add some precision; this is much like the character styles used in Western typography (rendered as HTML span styles, for example).

However, when the ultimate purpose is to convey an idea (not a fixed rendering form) and to ensure the broadest interoperability, Unicode does NOT propose any additional modifier, which I take as an indication (in addition to the preambles of the Standard) that _none_ are needed.

>> But it should be optional (and supplementary), not mandatory.
>
> I have a really hard time understanding why apparent spelling changes
> should be mediated by font changes for Indic scripts. It is not
> done that way for any other script.

Huh? If I want a rounded 'a' in Latin, I have to select a font with such a design. The same goes for a z or a J with a descender, or a q with a low stroke. I do not expect to be forced to use the "alternative" codepoints that have been added for special purposes, such as U+0251 or U+0292, for an illustrative use where I do NOT want to add any specific meaning.

The difference here is that you are saying that changing a z-shaped 'a' to a rounded one (etc.) is *not* a spelling change, while writing the i matra in one place or the other *is*. My wild guess is that some Indians may see it exactly the other way round...

And I know the line is NOT easy to draw.
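The point that the "alternative" codepoints change the text itself, not just its appearance, can be checked: U+0251 and U+0292 have no decomposition mapping at all, so no Unicode normalization form maps them back to 'a' or 'z'. A minimal sketch; the sample word is an arbitrary illustration.

```python
import unicodedata

# Arbitrary sample word, once with ordinary letters and once with the
# "alternative" codepoints U+0251 LATIN SMALL LETTER ALPHA and
# U+0292 LATIN SMALL LETTER EZH substituted for 'a' and 'z'.
plain = "jazz"
alternative = "j\u0251\u0292\u0292"

for ch in "\u0251\u0292":
    # An empty decomposition string means no canonical or compatibility mapping.
    print(f"U+{ord(ch):04X}", unicodedata.name(ch), repr(unicodedata.decomposition(ch)))

# Even compatibility normalization does not turn the alternative spelling into "jazz".
print(unicodedata.normalize("NFKC", alternative) == plain)  # False
```

In other words, choosing those codepoints for a purely visual effect would make the text unsearchable as ordinary Latin, whereas choosing a font leaves the text untouched.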

>>>> Example 2, Malayalam: dead RA can come either before the
>>>> (last part of the) consonant, or below it.
>>>
>>> A spelling difference that should be recorded in the sequence
>>> of characters (in some, not yet standardised, way), quite apart
>>> from font issues.
>>
>> The difference is between two rendering styles, which are
>
> "rendering style" should not be confused with "spelling".
> If they are different in any other way than **purely aesthetic**
> (line thicknesses, embellishments (like swashes and serifs),
> roundness, width, boldness, inclination, and such),

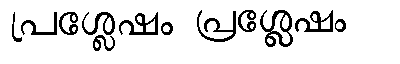

It is certainly such a difference (not purely aesthetic, I mean). See the attached image: it shows the same word (പ്രശ്ലേഷം, which would be the name for U+0D3D if non-English characters were accepted ;-)) twice. And of course, I used the same encoding to produce both images.
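Since both renderings in the image come from a single encoding, the difference lives entirely in the font and shaping engine. Listing the code points makes this visible. A minimal sketch, assuming the word is the plain sequence below (no ZWJ or ZWNJ); it only inspects the stored text, it does not shape or render it.

```python
import unicodedata

# The word from the image, spelled out as explicit code points:
# PA, VIRAMA, RA, SHA, VIRAMA, LA, VOWEL SIGN EE, SSA, ANUSVARA.
word = "\u0d2a\u0d4d\u0d30\u0d36\u0d4d\u0d32\u0d47\u0d37\u0d02"

for ch in word:
    print(f"U+{ord(ch):04X}", unicodedata.name(ch))

# Normalization leaves the sequence unchanged; nothing in the text itself
# says whether it should be drawn in the traditional or the reformed style.
print(unicodedata.normalize("NFC", word) == word)  # True
```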

> it's a spelling difference.
> (Even differences that are purely aesthetic for plain
> text may be significant in non-plaintext texts, e.g. for emphasis.)

Should emphasis be recorded as different Unicode codepoints? My reading was that it should not...

> Major glyph differences are spelling differences.

Sorry, but you did not define "major", so it is a bit difficult to follow. I understand that you exclude line thickness, obliqueness, or serifs, that is, everything commonly associated in Europe with typographic styles. Is the wide variety of glyphs available for U+0026 (the ampersand) a 'major' difference? Or the differences that used to be noted in the Unicode tables, e.g. for U+0901, U+090B, U+091D, U+0923, U+0936, to name a few?

> I'm not advocating banning either form. As I mentioned just above
> and should be clear also from my original mail, quite the opposite.

You may be missing something here. Nowadays, and since 1990, there is ONE encoding for Malayalam. Not two, not zero, just one. I can find no acknowledgement (from Unicode or, for that matter, from anyone in the West) that the rendering differences are spelling differences (justifying specific codepoints).

The best I can find is the acknowledgement (in the Indic OpenType specifications) that there is a need to distinguish two genuinely different "styles" in Uniscribe and related software: one, named "old style", is encoded as the "language system" MAL, the other, "reformed", as MLR.

As a result, Malayalees began to encode their script with the available codepoints. Both "camps" picked the codepoints according to their views, and they ran into problems implementing it (particularly the cillus). The problems were reported (for example, here around 1997-98, by Jeroen Hellingman). It took some time for Westerners to understand that when the Malayalees presented their arguments, what some called a 'cat' was not seen as a cat by everybody. Even (or particularly) if they used the Malayalam word for cat! (പൂച്ച?)

Now, if you consider that those two "spellings" should be encoded independently and differently, that will actually be great news for Malayalees, since it means that what they see as (their) Malayalam is not 'correct'; and if, furthermore, you are saying "yes, but how it should be encoded is still undetermined" (= to be delayed), it effectively prevents definitive documents from being encoded by either camp.

Particularly since nothing can prevent the "solution" from being some sort of judgment of Solomon, taking some bits of 'correctness' from each camp, thus requiring each camp to modify (recode) its current, already existing texts in other areas to fit the two-spelling model.

Antoine

This archive was generated by hypermail 2.1.5 : Mon Mar 27 2006 - 09:06:11 CST